A hybrid deep learning model for robust and data-efficient lithium-ion battery remaining useful life prediction

Abstract

Lithium-ion batteries are extensively utilized in applications like new energy vehicles and aerospace owing to their high energy density and safety, but their service life diminishes due to irreversible capacity degradation from repeated charge-discharge cycles, making accurate remaining useful life (RUL) prediction critical for reliability and operational safety. Current data-driven methods often struggle with long-range dependencies, noise from capacity regeneration, and efficient data utilization. To address these challenges, this study introduces a novel hybrid neural network architecture that integrates complementary ensemble empirical mode decomposition with adaptive noise (CEEMDAN) for denoising preprocessing with a Transformer-convolutional neural network (CNN)-bidirectional gated recurrent unit (BiGRU) model. The framework employs health indicators extracted from voltage and current profiles as inputs, where CEEMDAN mitigates interference effects, the Transformer captures global degradation trends via self-attention mechanisms, the CNN extracts localized short-term features, and the bidirectional GRU models temporal dependencies bidirectionally. Experimental validation on National Aeronautics and Space Administration (NASA) and our own test datasets demonstrates that the proposed approach significantly outperforms other models in key metrics such as mean absolute error (MAE), root mean square error (RMSE) and mean absolute percentage error (MAPE), achieving high accuracy even with minimal training data (e.g., only 40% of cycles). Furthermore, cross-dataset validation demonstrates robust generalization of our model, achieving MAPE below 3.5% when transferring between NASA and our battery data without retraining. This hybrid model offers a robust, data-efficient solution for enhancing RUL prediction in practical battery management systems, with strong generalization across diverse battery types.

Keywords

INTRODUCTION

Owing to their long storage life, pollution-free operation, high energy density, and light weight, lithium-ion batteries find widespread applications across various domains, including new energy vehicles, aerospace, and mobile communication systems[1-3]. However, as the number of charge-discharge cycles increases, irreversible chemical reactions, such as oxidation decomposition, occur within the battery. This results in the loss of electrode active materials and a decrease in available capacity, thereby diminishing the service life of the battery[4-7]. The remaining useful life (RUL) of a lithium-ion battery refers to the number of charge-discharge cycles that can be sustained before it degrades from its current state to the end of life (EOL) threshold. Since RUL is a critical metric for characterizing lithium-ion battery health and performance, accurate RUL prediction is vital for enhancing reliability and operational safety[8-10]. In this regard, current work mainly focuses on two major categories of RUL prediction approaches: model-based methods and data-driven methods.

Common model-based approaches include electrochemical models (EMs) and equivalent circuit models (ECMs), both of which aim to establish physical frameworks describing battery degradation trends[11-14]. Among them, EMs primarily focus on the internal chemical reaction mechanisms within different battery cells[13,15], whereas ECMs typically utilize various filtering algorithms to process resistance or capacitance data[11,12,14]. However, due to the difficulty in acquiring precise model parameters, these approaches typically exhibit limited generalization capability and incur notably high computational costs.

From the data-driven perspective, machine learning developments demonstrate considerable promise for RUL prediction. Unlike traditional modelling approaches, data-driven methods circumvent the need to account for the internal mechanisms of lithium-ion batteries. Instead, they analyze the historical operational data of these batteries to uncover the underlying patterns of battery degradation. Among these methods, recent studies have reported RUL prediction based on physics-informed neural networks (PINNs)[16-19]. Such approaches incorporate physical laws governing battery degradation, thereby enabling RUL prediction with only a small amount of training data. However, these methods are typically designed for a single battery chemistry. To enable direct application across diverse battery chemistries, our focus is on neural network methods that can accurately predict RUL using only externally measurable data. Commonly employed data-driven architectures encompass convolutional neural networks (CNNs)[20-23] and recurrent neural networks (RNNs)[24-26], among others[27-29]. CNNs, initially developed for image processing, possess robust self-adaptive feature extraction capabilities and demonstrate significant advantages in extracting localized features from data[30,31], while RNNs are particularly effective in modeling sequential data and have found broad applications within the RUL prediction domain. However, original RNN architectures struggle with long-term dependencies and vanishing gradients. To address these limitations, researchers have developed enhanced RNN models such as gated recurrent units (GRU)[32] and long short-term memory (LSTM) networks[33-35]. Subsequently, to more effectively capture contextual relationships across sequential data, bidirectional GRU (BiGRU) and bidirectional LSTM (BiLSTM) networks were introduced.
Recently, to address the long-term dependency limitations inherent in traditional deep learning models, researchers have introduced the attention mechanism (AM)[22,36,37]. AM is a technique specifically designed for modeling dependencies within sequences. Building on this capability, researchers have developed hybrid neural network architectures that integrate AM with CNNs and RNNs[20,22,37,38]. Furthermore, building upon the AM framework, Vaswani et al. from Google Brain proposed a novel architecture called Transformer[39,40]. Due to its unique structure, exceptional ability to capture long-range dependencies, and efficient parallel computation, the Transformer model has achieved remarkable success in natural language processing and is steadily gaining traction in time series forecasting domains[38,41-43]. Moreover, data preprocessing techniques are widely employed to address phenomena like capacity regeneration during battery degradation and measurement noise induced by random load fluctuations. These techniques include, but are not limited to, Empirical Mode Decomposition (EMD)[44], Variational Mode Decomposition (VMD)[33], Variational Filtering (VF)[45], and Particle Filtering (PF)[46]. The combination of data-driven methods with data preprocessing techniques offers a potent approach to further improve the accuracy of RUL predictions[33,38].

While data-driven methods have advanced battery RUL prediction, their practical application faces three interconnected challenges: (1) RNN-based models often fail to capture long-range dependencies in degradation data due to gradient issues[47]; (2) Sensor noise and capacity regeneration can mislead predictions, yet existing preprocessing techniques are not well integrated with deep learning models[20]; (3) Many models require large training datasets - often scarce in practice - and struggle to generalize across different battery types.

To address the aforementioned challenges holistically, this study proposes a novel hybrid framework that integrates advanced data preprocessing with a multi-module deep learning architecture. Unlike previous works that may focus on a single aspect, our work is designed to address all three challenges concurrently. The specific contributions map to the challenges as follows: (1) To capture long-range dependencies, we leverage the Transformer module, whose self-attention mechanism models global contextual information across the entire battery lifecycle; (2) To mitigate noise interference, we incorporate complementary ensemble empirical mode decomposition with adaptive noise (CEEMDAN) as a robust preprocessing step to denoise the health indicators and disentangle the capacity regeneration phenomenon; (3) To extract local features and model complex temporal dynamics for improved data efficiency and robustness, we integrate a convolutional neural network (CNN) for local pattern extraction and a bidirectional gated recurrent unit (BiGRU) for bidirectional temporal dependency modeling. The CNN-BiGRU structure ensures that both short-term fluctuations and sequential trends are captured effectively, enhancing performance even with limited training data. Together, these components enable unified modeling of short-term variations and global degradation patterns, enhancing prediction accuracy, robustness, and deployment feasibility in practical engineering applications.

In the present work, experimental validation employs battery datasets from National Aeronautics and Space Administration (NASA) and lithium nickel cobalt manganese oxide (NCM) battery datasets from China University of Geosciences (CUG). Comparative analysis against traditional models (LSTM, GRU, BiGRU, and BiLSTM networks) demonstrates superior prediction accuracy without substantial computational overhead. Furthermore, cross-dataset validation demonstrates robust generalization capabilities of the proposed model across diverse battery types, including NASA and NCM types.

MATERIALS AND METHODS

To present the methodology more clearly, we organize the description into three parts: (1) data preprocessing, (2) model configuration, and (3) implementation details.

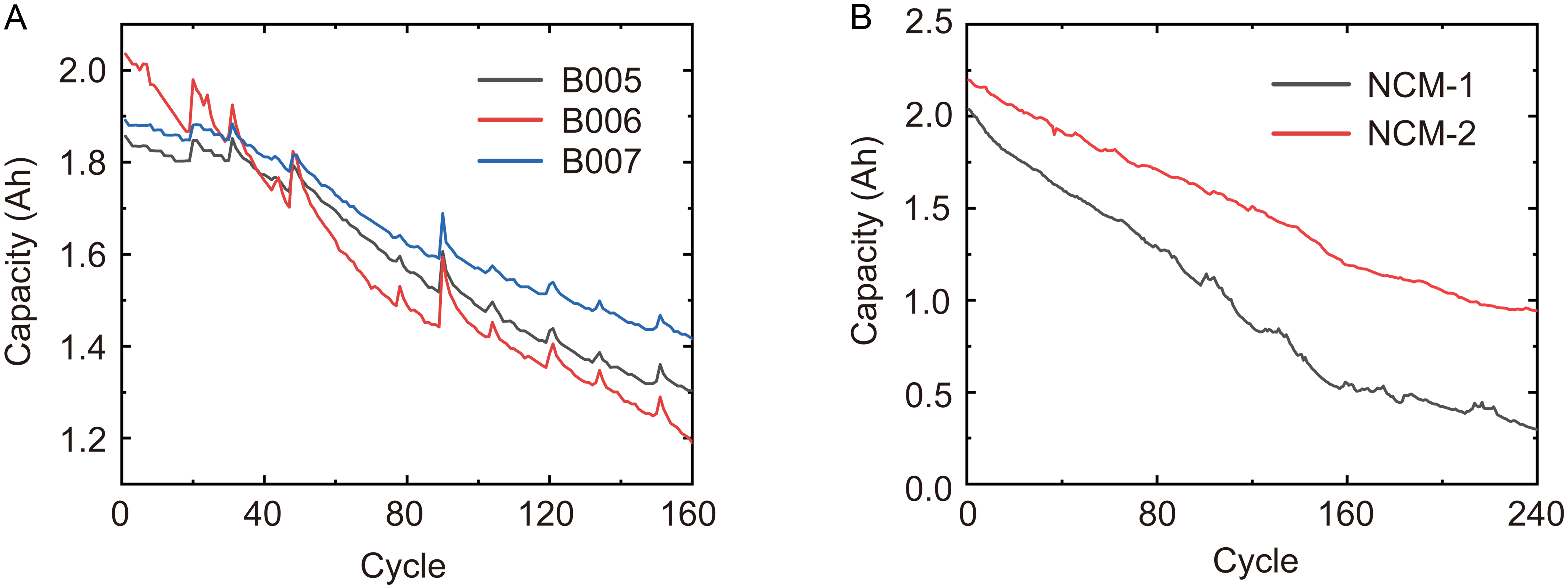

Data processing

In data preprocessing, health indicators (HIs) are first extracted from raw battery operational data. The validity of these HIs is rigorously evaluated to establish strong correlation with battery degradation trajectories. Subsequent application of CEEMDAN decomposes and reconstructs the HI time series, effectively mitigating noise interference from capacity regeneration and electromagnetic disturbances. This study utilizes two distinct battery datasets: the NASA-B005 cell and the China University of Geosciences NCM-1 cell. Their representative capacity degradation curves are presented in Figure 1. Using the NASA battery dataset as an example, we first detail the HIs extraction methodology.

Figure 1. Representative battery capacity degradation curves. (A) NASA battery dataset; (B) NCM battery dataset. NASA: National Aeronautics and Space Administration; NCM: lithium nickel cobalt manganese oxide.

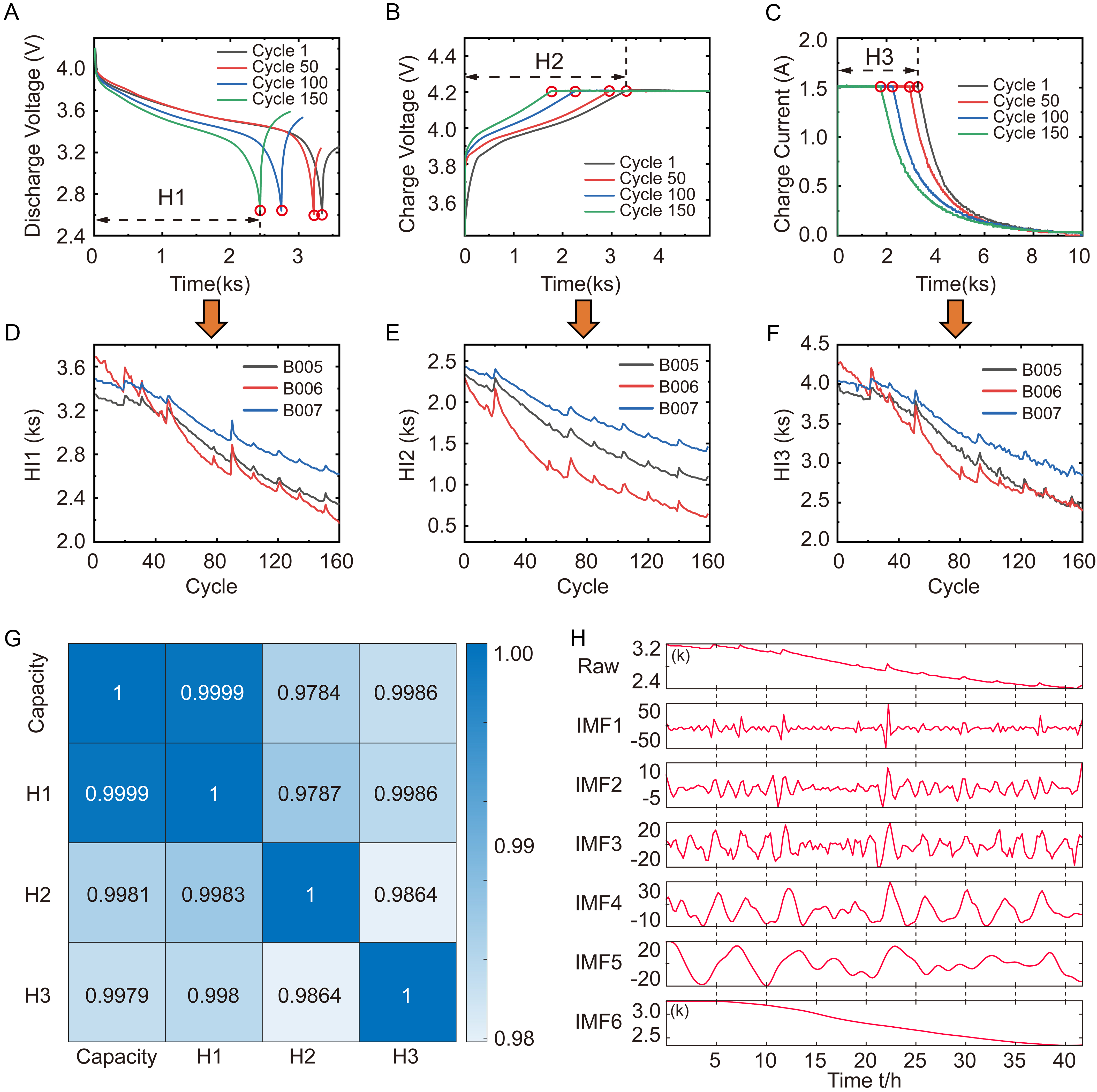

Beyond direct capacity utilization, selecting appropriate HIs for indirect capacity prediction offers substantial cost savings in RUL prediction. Consequently, this study extracts indirect HIs from readily monitorable voltage and current profiles during charge/discharge cycles to assess lithium-ion battery (LiB) capacity degradation. Figure 2A-C shows the discharge voltage profiles, charge voltage profiles, and charge current profiles at uniform cycle intervals for the NASA-B005 cell. These profiles reveal progressive deterioration of voltage response and current characteristics with cycling, indicating underlying material/structural alterations. Three time-based features that effectively characterize LiB health are identified: H1, the discharge voltage decay duration; H2, the charge voltage rise duration; and H3, the constant-current charge duration. The cycle-by-cycle evolution of these HIs in Figure 2D-F exhibits significant correspondence with the capacity profiles shown in Figure 1A. To quantitatively validate the degradation relevance, linear (Pearson) and monotonic (Spearman) correlations between each HI and RUL are analyzed using their respective coefficients (for details see Supplementary Note 1). Figure 2G and Supplementary Figure 1 illustrate the correlation analysis results for NASA-B005. Both coefficients demonstrate strong HI-RUL relationships, confirming the selected HIs from battery data as validated inputs for subsequent prediction, with capacity designated as the output variable.
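As a rough illustration of the correlation check described above, the following standard-library sketch computes Pearson and Spearman coefficients between one HI series and a capacity series. The numerical values are hypothetical placeholders, not measurements from NASA-B005, and the exact procedure of Supplementary Note 1 may differ.

```python
from math import sqrt


def pearson(x, y):
    """Pearson linear correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def average_ranks(values):
    """Rank values from 1 to n, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation, i.e. the Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical H1 values (seconds) and capacities (Ah) over six cycles.
h1 = [3300.0, 3250.0, 3190.0, 3100.0, 3040.0, 2950.0]
capacity = [1.86, 1.84, 1.80, 1.77, 1.74, 1.70]
print(pearson(h1, capacity), spearman(h1, capacity))
```

Pearson measures how close the relationship is to a straight line, whereas Spearman only requires it to be monotonic, which is why reporting both gives a more complete picture of an HI's suitability.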

Figure 2. HIs extraction of NASA-B005 battery data. (A) Discharge voltage versus time; (B) Charge voltage versus time; (C) Charge current versus time; (D) Extracted H1, the discharge voltage decay duration; (E) Extracted H2, the charge voltage rise duration; (F) Extracted H3, the constant-current charge duration; (G) Spearman correlation analysis diagram; (H) CEEMDAN decomposition curves of the extracted H1 for NASA-B005, where h = 4 is the sampling interval. HIs: Health indicators; HI: health indicator; NASA: National Aeronautics and Space Administration; CEEMDAN: complementary ensemble empirical mode decomposition with adaptive noise; IMF: intrinsic mode function.

Following the same methodology, we extract HIs from the NCM-1 battery data. Supplementary Figure 2A and B present NCM-1’s discharge and charge voltage profiles sampled at uniform cycle intervals. Given the constant-current charge/discharge protocol employed for NCM cells, only H1 (discharge voltage decay duration) and H2 (charge voltage rise duration) are utilized as HIs. The cycle-by-cycle evolution of these HIs is depicted in Supplementary Figure 2C and D, while Supplementary Figure 2E and F display their corresponding Pearson and Spearman correlation coefficients, respectively. Both HIs exhibit strong correlations with the capacity degradation profile shown in Figure 1B, confirming their suitability as prognostic inputs.
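The duration-type indicators H1 and H2 can be sketched as the time a voltage curve takes to move between two voltage levels. The snippet below is a minimal illustration under that assumption. The function names and the threshold voltages are hypothetical, since the text does not state which voltage window defines each duration.

```python
def threshold_crossing_time(times, values, level):
    """Linearly interpolated time at which `values` first crosses `level`."""
    for i in range(1, len(values)):
        v0, v1 = values[i - 1], values[i]
        # A crossing occurs when the two samples lie on opposite sides of
        # the level (or one of them sits exactly on it).
        if (v0 - level) * (v1 - level) <= 0 and v0 != v1:
            frac = (level - v0) / (v1 - v0)
            return times[i - 1] + frac * (times[i] - times[i - 1])
    raise ValueError("level is never crossed")


def voltage_interval_duration(times, voltages, v_start, v_end):
    """Time taken for the voltage to move from v_start to v_end."""
    t_start = threshold_crossing_time(times, voltages, v_start)
    t_end = threshold_crossing_time(times, voltages, v_end)
    return t_end - t_start


# Hypothetical discharge curve for one cycle: time in seconds, voltage in volts.
times = [0.0, 600.0, 1200.0, 1800.0, 2400.0, 3000.0]
voltages = [4.2, 3.9, 3.7, 3.5, 3.2, 2.7]

# Assumed window of 3.8 V down to 3.2 V for an H1-like indicator.
h1_like = voltage_interval_duration(times, voltages, v_start=3.8, v_end=3.2)
print(h1_like)
```

Applying the same function to a charge curve with `v_start` below `v_end` would give an H2-like rise duration. Repeating the calculation for every cycle yields the HI time series.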

To mitigate noise interference from capacity regeneration, electromagnetic disturbances, and varying charge/discharge rates, this study employs CEEMDAN for input data preprocessing. CEEMDAN resolves mode mixing through adaptive noise injection, facilitating effective separation of distinct modes. Additionally, it reduces pseudo-modes via ensemble averaging, smooths injected noise, and retains only significant components. After a series of decomposition processes (for details see Supplementary Note 2), the HI sequence H(t) decomposes into k intrinsic mode functions (IMFs) and a residual R(t)[20]:

$$H(t) = \sum_{i=1}^{k} \mathrm{IMF}_i(t) + R(t)$$

Figure 2H and Supplementary Figure 3 illustrate the decomposition results of H1 sequences for NASA-B005 and NCM-1 batteries. Eventually, HI reconstruction via autocorrelation coefficients of IMFs yields denoised inputs for subsequent machine learning models.
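One plausible reading of the autocorrelation-based reconstruction is sketched below: IMFs whose lag-1 autocorrelation is low are treated as noise-dominated and dropped, and the remaining IMFs are added back to the residual. The selection rule, the threshold of 0.3, and the function names are assumptions for illustration only. The decomposition itself is assumed to have been carried out beforehand.

```python
def lag_autocorrelation(series, lag=1):
    """Sample autocorrelation coefficient of `series` at the given lag."""
    n = len(series)
    mean = sum(series) / n
    denom = sum((v - mean) ** 2 for v in series)
    if denom == 0:
        return 0.0  # a constant series carries no oscillatory information
    num = sum((series[t] - mean) * (series[t + lag] - mean) for t in range(n - lag))
    return num / denom


def reconstruct(imfs, residual, threshold=0.3, lag=1):
    """Sum the residual and every IMF whose autocorrelation reaches the threshold."""
    kept = [imf for imf in imfs if lag_autocorrelation(imf, lag) >= threshold]
    return [residual[t] + sum(imf[t] for imf in kept) for t in range(len(residual))]


# Toy components standing in for a decomposition result.
imf_noise = [1, -1, 1, -1, 1, -1, 1, -1]   # rapidly alternating, noise-like
imf_slow = [0, 1, 2, 3, 3, 2, 1, 0]        # slowly varying, trend-like
residual = [5.0] * 8

denoised = reconstruct([imf_noise, imf_slow], residual)
print(denoised)
```

In this toy case the alternating component has a strongly negative lag-1 autocorrelation and is discarded, while the slowly varying component is retained.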

Model training configuration

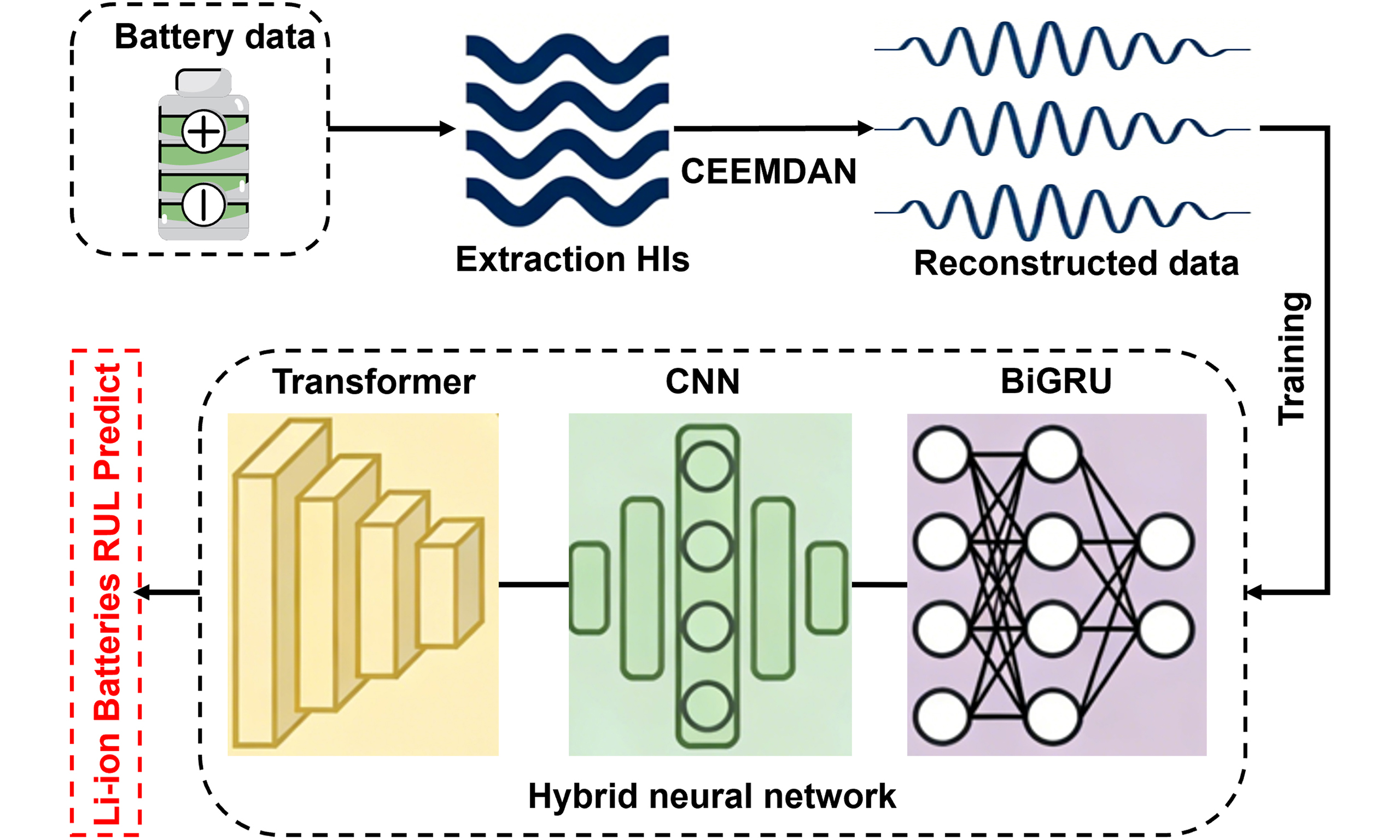

This study adopts an indirect prediction approach, with the lithium-ion battery RUL prediction framework illustrated in Figure 3. For model training, we propose a hybrid Transformer-CNN-BiGRU architecture for RUL estimation. Within this integrated framework, each component provides complementary capabilities. The CNN extracts localized transient patterns, the Transformer resolves long-range dependencies, and the BiGRU captures bidirectional temporal contexts, collectively enabling robust and accurate RUL prediction. The RUL prediction module employs the initial 40%, 50%, and 60% of capacity data as training sets to investigate model sensitivity to data volume. Reconstructed HI sequences serve as model inputs, with corresponding capacity measurements as outputs. The Transformer-CNN-BiGRU network undergoes pretraining using these varying-length datasets before performing real-time RUL prediction on the remaining data. Prediction quality is quantified through established metrics - mean absolute error (MAE), root mean square error (RMSE), coefficient of determination (R2), and mean absolute percentage error (MAPE). Implementation details for each module are elaborated in subsequent sections.
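The chronological split and the four evaluation metrics named above can be sketched with the standard library as follows. The function names are illustrative, and the formulas are the textbook definitions rather than a transcription of Supplementary Note 5.

```python
from math import sqrt


def chronological_split(series, train_fraction):
    """Use the first `train_fraction` of the cycles for training, the rest for testing."""
    cut = int(len(series) * train_fraction)
    return series[:cut], series[cut:]


def evaluate(y_true, y_pred):
    """Return MAE, RMSE, MAPE (in percent) and R2 for paired sequences."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    rmse = sqrt(sum(e * e for e in errors) / n)
    mape = 100.0 * sum(abs(e / t) for e, t in zip(errors, y_true)) / n
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot
    return {"MAE": mae, "RMSE": rmse, "MAPE": mape, "R2": r2}


# Hypothetical capacity series (Ah) for ten cycles.
capacity = [1.86, 1.85, 1.83, 1.82, 1.80, 1.78, 1.77, 1.75, 1.73, 1.70]
for fraction in (0.4, 0.5, 0.6):
    train, test = chronological_split(capacity, fraction)
    print(fraction, len(train), len(test))
```

Because the split is chronological rather than random, the test set always consists of later cycles than the training set, which mirrors how a deployed predictor would be used.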

Figure 3. The architecture of our battery RUL prediction framework. RUL: Remaining useful life; NASA: National Aeronautics and Space Administration; NCM: nickel cobalt manganese oxide; HIs: health indicators; HI: health indicator; IMFs: intrinsic mode functions; IMF: intrinsic mode function; CEEMD: complementary ensemble empirical mode decomposition; CNN: convolutional neural network; BiGRU: bidirectional gated recurrent unit; GRU: gated recurrent unit; BP: back propagation; LSTM: long short-term memory; PAW: present article’s work; MAE: mean absolute error; RMSE: root mean square error; R2: coefficient of determination; MAPE: mean absolute percentage error.

Transformer-CNN-BiGRU prediction model

This section details the hybrid neural network model, Transformer-CNN-BiGRU, adopted in the present work. This model captures short-term local characteristics through CNN, processes long-range dependencies via Transformer, and models bidirectional temporal information using BiGRU, thereby generating a more robust and accurate predictor for battery health states. Overall, the four modules - CEEMDAN, Transformer, CNN, and BiGRU - work collaboratively as illustrated in Figure 4A. Their respective tasks and core functions are outlined below:

Figure 4. Transformer-CNN-BiGRU model structure. (A) Model framework. Schematic diagram of (B) CNN structure, (C) Transformer structure, (D) BiGRU structure. CNN: Convolutional neural network; BiGRU: bidirectional gated recurrent unit; CEEMDAN: complementary ensemble empirical mode decomposition with adaptive noise; 1D: one-dimensional; IMF: intrinsic mode function; GRU: gated recurrent unit; HI: health indicator.

(1) CEEMDAN is responsible for signal decoupling and denoising. Its key role is to decompose the original multicomponent signal into several IMFs, each corresponding to features at a specific frequency scale, thereby isolating a purified degradation signature. It takes the raw battery health indicator (HI) degradation curve as input and outputs k IMFs, each being a one-dimensional time series of the same length as the original signal.

(2) CNN performs spatio-temporal feature extraction. Its core function is to capture local frequency-domain features through convolution and, by sliding the convolutional window along the time dimension, to extract the temporal evolution patterns of battery degradation. It receives the denoised HI sequence (IMF time-frequency information) as input, processes each IMF sequence in parallel, uses past time steps (the input window) to predict the next time step, and outputs data after convolution and pooling.

(3) Transformer aims to extract global features and long-term dependencies from the sequence. It works by enabling any time point in the sequence to interact directly with and be weighted against all other time points. Positional encoding adds order information to the input sequence, allowing the model to understand the sequential arrangement of data points. Its input is the IMF sequence containing local features output by the CNN, and its output is a sequence that encodes long-range dependencies.

(4) BiGRU is designed to capture short-term temporal dynamics from both forward and backward directions. It operates through reset and update gates to effectively model short-term dependencies in the sequence and mitigate the vanishing gradient problem. It takes the Transformer’s output as input and produces a prediction for each IMF component.

For the k IMF components obtained from CEEMDAN, k independent Transformer-CNN-BiGRU models are trained. The prediction results for each component on the training and test sets are obtained, and the predictions from all k components are summed directly to reconstruct the final capacity prediction sequence.
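The component-wise scheme described above can be sketched in two small helpers: one builds (input window, next value) training pairs from a component series, and the other sums the per-component forecasts into the final sequence. The helper names and the toy numbers are hypothetical, and the trained per-component models are represented only by their outputs.

```python
def make_windows(series, window):
    """Pair each run of `window` consecutive values with the value that follows it."""
    count = len(series) - window
    inputs = [series[i:i + window] for i in range(count)]
    targets = [series[i + window] for i in range(count)]
    return inputs, targets


def sum_component_predictions(component_predictions):
    """Add the forecasts of all components element-wise."""
    return [sum(step) for step in zip(*component_predictions)]


# Supervised pairs for one component with a two-step input window.
inputs, targets = make_windows([1.0, 2.0, 3.0, 4.0, 5.0], window=2)
print(inputs, targets)

# Hypothetical forecasts from two per-component models over two test cycles.
final_prediction = sum_component_predictions([[1.0, 2.0], [0.5, 0.25]])
print(final_prediction)
```

Direct summation is valid because the decomposition is additive: the components and the residual add up to the original sequence, so their forecasts add up to a forecast of that sequence.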

To extract local spatial features from input data, we employ CNN to apply convolutional filters to the input time series. The primary CNN structure consists of an input layer, convolutional layer, pooling layer, fully connected layer, and output layer, as illustrated in Figure 4B. For 1D (one-dimensional) convolution, the feature extraction operation is defined as[22]:

where f(·) denotes the convolutional filter.
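For orientation, a generic one-dimensional convolution followed by pooling can be sketched as below. This is a textbook form with no padding, stride 1, and a ReLU activation, all of which are assumptions. It is not a reconstruction of the paper's own equation or of its layer sizes.

```python
def conv1d_valid(signal, kernel, bias=0.0):
    """Slide `kernel` over `signal` (no padding, stride 1) and apply ReLU."""
    width = len(kernel)
    outputs = []
    for start in range(len(signal) - width + 1):
        window = signal[start:start + width]
        activation = sum(w * x for w, x in zip(kernel, window)) + bias
        outputs.append(max(0.0, activation))  # ReLU keeps positive responses
    return outputs


def max_pool1d(features, size=2):
    """Non-overlapping max pooling; a trailing partial window is dropped."""
    return [max(features[i:i + size]) for i in range(0, len(features) - size + 1, size)]


# A difference kernel responds to local increases in the input series.
signal = [1.0, 2.0, 4.0, 7.0, 11.0]
features = conv1d_valid(signal, kernel=[-1.0, 1.0])
pooled = max_pool1d(features, size=2)
print(features, pooled)
```

Each output depends only on a short window of neighbouring time steps, which is what makes the convolutional layer suited to local, short-term patterns.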

The Transformer architecture is a method for processing long sequential data based on self-attention mechanisms. By computing pairwise relationships across all timesteps, it enables the model to capture global dependencies. Its structure is illustrated in Figure 4C. For time series forecasting, the Transformer utilizes multi-head attention layers to compute multiple self-attention heads in parallel, concatenating their outputs for the final result. Using linearly projected Query (Q), Key (K), and Value (V) matrices from the input sequence, attention weights are computed via the attention function (for details see Supplementary Note 3). This process allows the model to learn dependencies between distant time steps.
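The attention step can be illustrated with a single-head, standard-library sketch of scaled dot-product attention in the form introduced by Vaswani et al. The inputs are assumed to be already-projected Q, K and V rows. The optional causal mask prevents a time step from attending to later steps. Multi-head concatenation and the learned projections are omitted for brevity.

```python
from math import exp, sqrt


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values, causal=False):
    """Return (outputs, weights) for one attention head."""
    d_k = len(keys[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        scores = []
        for j, k in enumerate(keys):
            if causal and j > i:
                scores.append(float("-inf"))  # masked: future time step
            else:
                scores.append(sum(a * b for a, b in zip(q, k)) / sqrt(d_k))
        row = softmax(scores)
        weights.append(row)
        # Each output is the attention-weighted average of the value rows.
        outputs.append([sum(w * v[c] for w, v in zip(row, values))
                        for c in range(len(values[0]))])
    return outputs, weights


# Two time steps with two-dimensional features (toy values).
x = [[1.0, 0.0], [0.0, 1.0]]
outputs, weights = scaled_dot_product_attention(x, x, x, causal=True)
print(weights)
```

With the causal mask enabled, the first time step can only attend to itself, so its weight row places all mass on position 0.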

For the last module, the GRU is a streamlined variant of the LSTM that incorporates only a reset gate and an update gate, as shown in Figure 4D. While achieving prediction accuracy comparable to the LSTM, the GRU has fewer trainable parameters and converges faster. The computational flow within each GRU cell is described in Supplementary Note 4. Furthermore, BiGRU captures dependencies between sequence endpoints by processing information in both directions: forward (start-to-end) and backward (end-to-start). The Transformer output features are processed by a BiGRU layer to model bidirectional temporal dependencies[20]. The forward GRU layer processes the sequence in its original order:

$$\overrightarrow{h}_t = \mathrm{GRU}\left(x_t, \overrightarrow{h}_{t-1}\right)$$

The backward GRU layer processes the reversed sequence:

$$\overleftarrow{h}_t = \mathrm{GRU}\left(x_t, \overleftarrow{h}_{t+1}\right)$$

and the final output $h_t$ at each timestep t integrates both directions:

$$h_t = \alpha \overrightarrow{h}_t + \beta \overleftarrow{h}_t + c_t$$

where $x_t$ is the Transformer output feature at timestep t, α and β are weight coefficients controlling the contributions of the forward and backward states, and $c_t$ is the bias term.
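The bidirectional pass and the weighted fusion of forward and backward states can be sketched with a scalar GRU cell, as below. The gate equations follow the commonly used GRU formulation and may differ in convention from Supplementary Note 4. The parameter names and values are hypothetical, and a real layer would use vector-valued states and learned weight matrices.

```python
from math import exp, tanh


def sigmoid(x):
    return 1.0 / (1.0 + exp(-x))


def gru_step(x, h_prev, p):
    """One scalar GRU update with update gate z and reset gate r."""
    z = sigmoid(p["wz"] * x + p["uz"] * h_prev + p["bz"])
    r = sigmoid(p["wr"] * x + p["ur"] * h_prev + p["br"])
    candidate = tanh(p["wh"] * x + p["uh"] * (r * h_prev) + p["bh"])
    return (1.0 - z) * h_prev + z * candidate


def run_gru(sequence, p):
    """Run the cell over a sequence from a zero initial state."""
    h, states = 0.0, []
    for x in sequence:
        h = gru_step(x, h, p)
        states.append(h)
    return states


def bigru(sequence, p_fwd, p_bwd, alpha=0.5, beta=0.5, bias=0.0):
    """Fuse forward and backward states as alpha*forward + beta*backward + bias."""
    forward = run_gru(sequence, p_fwd)
    # Process the reversed sequence, then flip the states back into time order.
    backward = run_gru(sequence[::-1], p_bwd)[::-1]
    return [alpha * f + beta * b + bias for f, b in zip(forward, backward)]


# Hypothetical parameters, shared by both directions for brevity.
params = {"wz": 0.5, "uz": 0.1, "bz": 0.0,
          "wr": 0.3, "ur": 0.2, "br": 0.0,
          "wh": 1.0, "uh": 0.5, "bh": 0.0}
print(bigru([0.2, 0.4, 0.1], params, params))
```

At each time step the forward state summarizes everything before it and the backward state everything after it, so the fused output has access to context from both ends of the sequence.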

RESULTS AND DISCUSSION

This section presents the experimental validation of the proposed hybrid model, organized into four parts: dataset, experimental setting, model validation, and comparative analysis. The experiments aim to demonstrate significant improvements in reliability and prediction accuracy over standalone architectures and baseline models (LSTM, GRU, BiLSTM, and CNN-BiGRU).

Dataset

Two distinct battery degradation datasets were utilized, including NASA Battery Prognostics Repository (B005 cell) and laboratory-tested NCM batteries obtained at China University of Geosciences. The NCM battery testing protocol maintained ambient temperature at 25 ± 1 °C within a 2.7-4.3 V voltage window under half-cell configuration, implementing formation cycling at 0.1C for 5 cycles followed by 300 aging cycles at 1C, with electrode mass loading standardized at 12.8 mg/cm2 on 15 mm diameter substrates. We will open source this data for researchers’ reference.

Experimental setting

The proposed lithium-ion battery RUL prediction model employs a hybrid Transformer-CNN-BiGRU architecture to capture both long-term dependencies and sequential degradation patterns. The specific experimental parameters are listed in Supplementary Table 1. Specifically, the Transformer module processes input sequences using a historical window of two cycles, with positional encoding supporting sequences up to 512 steps. It incorporates four parallel attention heads, each projecting inputs into 32-dimensional key spaces, and utilizes causal masking in the initial attention layer to enforce temporal constraints. The bidirectional GRU component consists of asymmetric forward and backward processing paths, with the forward GRU containing six hidden units and the reverse GRU containing ten hidden units. These bidirectional features are fused through concatenation before the final regression output. The model is trained on capacity data normalized to the [0,1] range, using the Adam optimizer with an initial learning rate of 0.001, which decays by a factor of 0.1 after 50 epochs. Training used a batch size of 256 samples with L2 regularization (λ = 0.001) and gradient clipping at a threshold of 10 to ensure stable convergence. This configuration leverages the Transformer’s strength in capturing complex temporal relationships across cycles while utilizing the BiGRU’s capacity to model bidirectional degradation dynamics from limited historical data points. Moreover, to quantitatively evaluate our method, we adopt three statistical metrics - MAE, RMSE, and MAPE (for details see Supplementary Note 5).
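Three of the training settings above lend themselves to a compact sketch: min-max scaling to [0,1], a stepwise learning-rate decay, and gradient clipping. The helpers below are illustrative only. In particular, the decay is assumed to repeat every 50 epochs and the clipping is assumed to act on the global L2 norm, since the text specifies neither detail.

```python
from math import sqrt


def min_max_scale(values):
    """Scale values to [0, 1] and return the bounds needed to undo the scaling."""
    low, high = min(values), max(values)
    return [(v - low) / (high - low) for v in values], (low, high)


def min_max_invert(scaled, bounds):
    """Map scaled values back to the original units."""
    low, high = bounds
    return [s * (high - low) + low for s in scaled]


def step_decay_lr(epoch, initial_lr=1e-3, factor=0.1, period=50):
    """Learning rate after multiplying by `factor` once per `period` epochs."""
    return initial_lr * factor ** (epoch // period)


def clip_by_global_norm(gradients, threshold=10.0):
    """Rescale the gradient vector so that its L2 norm does not exceed the threshold."""
    norm = sqrt(sum(g * g for g in gradients))
    if norm <= threshold:
        return list(gradients)
    return [g * threshold / norm for g in gradients]


scaled, bounds = min_max_scale([1.0, 2.0, 3.0])
print(scaled, min_max_invert(scaled, bounds))
print(step_decay_lr(0), step_decay_lr(50))
print(clip_by_global_norm([30.0, 40.0]))
```

When only the first 40% to 60% of cycles are available for training, computing the scaling bounds from that training portion alone avoids leaking information from later cycles into the model inputs.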

Model validation

To evaluate the contribution of each component of the proposed Transformer-CNN-BiGRU hybrid model, combined with CEEMDAN data preprocessing, to the predictive performance, four comparative schemes were designed as presented in Table 1. Here, S* denotes the complete proposed scheme, while S1, S2, and S3 represent the prediction schemes where the CNN module, the Transformer module, and the CEEMDAN preprocessing were removed, respectively. These four schemes were applied to both NASA and NCM batteries. The corresponding prediction results and evaluation metrics are illustrated in Figure 5A-D. Specifically, Figure 5A and B display the results for the NASA battery, and Figure 5C and D show the results for the NCM battery. For both experimental datasets, the first 50% of the data was utilized as the training set during the train-test split.

Figure 5. Validation of RUL prediction performance. (A) RUL prediction results and (B) Evaluation metrics for NASA-B005 battery cell using different schemes; (C) RUL prediction results for NCM-1 battery cell using different schemes; (D) Evaluation metrics for NCM-1 prediction results; (E) Predicted versus actual RUL curves for the NCM-1 and NCM-2 batteries using the model trained exclusively on NASA-B005 data; (F) Generalization results on NASA-B005, B006, and B007 batteries from the model trained on NCM-1 data. RUL: Remaining useful life; NASA: National Aeronautics and Space Administration; NCM: nickel cobalt manganese; MAE: mean absolute error; RMSE: root mean square error; MAPE: mean absolute percentage error.

Table 1. Method statement

| Symbol | Method |
| --- | --- |
| S* | CEEMDAN+Transformer-CNN-BiGRU |
| S1 | CEEMDAN+Transformer-BiGRU |
| S2 | CEEMDAN+CNN-BiGRU |
| S3 | Transformer-CNN-BiGRU |

As evident from Figure 5A-D, S* (the full model) achieved the best performance on both battery datasets, with specific error metrics provided in Supplementary Table 2. It achieved the lowest MAPE on the NASA dataset (approximately 1.05%) and an MAPE below 3.3% on the NCM dataset. The predicted curve closely follows the true values and remains stable even during capacity regeneration phases (visible as fluctuations in the curve), indicating that the modules work together effectively to capture short-term fluctuations and long-term trends while remaining robust to noise. The performance of S1 (CNN removed) declined noticeably, with significantly higher MAE and MAPE than S*. For example, on the NASA data [Figure 5A and B], the prediction curve shows smoothed deviations in the mid-term and fails to track local capacity fluctuations accurately. This highlights the critical role of the CNN: it extracts local features (e.g., short-term changes in voltage/current) and provides essential context for subsequent modules. Without the CNN, the Transformer and BiGRU lack local contextual information, leading to reduced sensitivity to transient variations. S2 (Transformer removed) performed the worst, with the prediction curve deviating substantially from the true values toward the end of the cycle [Figure 5A and C] and the MAPE on the NASA data increasing sharply. This indicates that the Transformer is central to handling long-range dependencies: its self-attention mechanism captures the global degradation trend, preventing the model from overfitting to short-term noise. Without the Transformer, the model (CNN-BiGRU only) overfits locally and fails to model the slow degradation over the full life cycle effectively. The ablation experiments in Figure 5A-D confirm that the Transformer, CNN, BiGRU, and CEEMDAN modules operate as a tightly coupled sequential pipeline with a clear division of labor and mutual reinforcement.
The superiority of the full model (S*) stems from the complementary roles of the modules: CNN-extracted local features support the global analysis of the Transformer, the Transformer’s output improves the bidirectional modeling of the BiGRU, and CEEMDAN preprocessing supplies a clean input to the entire pipeline.

Moreover, the denoising efficacy of CEEMDAN and its parameter sensitivity are pivotal to the model’s performance. Supplementary Note 6 provides a comprehensive analysis, showing that the prediction error (MAE) reaches a minimum at a noise amplitude of 0.15 (see Supplementary Figure 4A and Supplementary Table 3) and stabilizes with approximately six IMFs (see Supplementary Figure 4B and Supplementary Table 4). This parameter optimization directly contributes to the high accuracy observed in our main results. Moreover, as shown in Supplementary Figure 4C and the associated error metrics provided in Supplementary Table 5, comparative studies with VMD and wavelet-based denoising demonstrate that CEEMDAN more effectively captures the underlying capacity regeneration trends, which is critical for reducing error propagation in the prognostic model.

To validate the generalization capability of the proposed model across diverse battery types, we conducted cross-dataset experiments. As illustrated in Figure 5E, the model trained exclusively on the entire NASA-B005 dataset was deployed to predict RUL for the NCM cells, while Figure 5F demonstrates predictions on NASA-B005, B006, and B007 batteries using a model trained solely on NCM-1 data. Furthermore, the evaluation metrics [Supplementary Table 6] reveal that predicted capacity curves exhibit remarkable consistency with ground-truth measurements across all test cases. Specifically, MAE values remained below 0.04 and MAPE under 3.5% despite significant differences in battery materials and cycling protocols. These results demonstrate the model’s capacity for robust cross-dataset RUL predictions, highlighting its potential for deployment in practical battery management systems with diverse cell types.

Furthermore, to validate the accuracy of health indicator (HI) extraction, we conducted a comparative experiment under the same model configuration, using either the extracted HI or the direct capacity as input. The corresponding prediction results are shown in Supplementary Figure 5, and the associated error metrics are provided in Supplementary Table 7. It can be observed that the results obtained using HI as an indirect input exhibit only minimal discrepancy compared to those using the direct capacity values. This small deviation can be attributed to the strong correlation between the HI and the actual capacity, as supported by the high Spearman and Pearson correlation coefficients discussed earlier. Therefore, it can be concluded that the use of HI as an indirect metric adequately meets the requirement for high-precision prediction.

Comparative analysis

To further validate the performance improvement of the proposed hybrid model over standalone architectures and baseline models, comparative studies were conducted against LSTM, GRU, BiLSTM, and CNN-BiGRU. As depicted in Figure 6, these five models were evaluated using NASA-B005 data, with training sets comprising the initial 40%, 50%, and 60% of the data, respectively. This allowed for an assessment of model performance relative to the amount of available training data.

Figure 6. The RUL prediction results for the NASA-B005 battery. (A, C, E) show the prediction results using the first 40%, 50%, and 60% of the data as the training set, respectively, while (B, D, F) display the corresponding metric analyses. RUL: Remaining useful life; NASA: National Aeronautics and Space Administration; CNN: convolutional neural network; BiGRU: bidirectional gated recurrent unit; GRU: gated recurrent unit; BiLSTM: bidirectional LSTM; LSTM: long short-term memory; MAE: mean absolute error; RMSE: root mean square error; MAPE: mean absolute percentage error.

Figure 6A, C and E demonstrate that the predicted capacity curves of all five models progressively converge towards the true curve as the volume of training data increases. Notably, the proposed hybrid model exhibited superior predictive performance even with only 40% training data. As Figure 6B, D and F reveal, its MAE, RMSE, and MAPE values were substantially lower than those of the four baseline models across all training data fractions. Among the baselines, the standalone LSTM, GRU, and BiLSTM models performed unsatisfactorily, and only the CNN-BiGRU model achieved marginally satisfactory performance, and then only when trained on 60% of the data [Figure 6E and F]. Supplementary Table 8 presents detailed error metrics, which further support these results. An identical comparative analysis was performed on the NCM-1 battery dataset, with results presented in Supplementary Figure 6. The proposed hybrid model maintained excellent predictive performance on this dataset as well, further supporting its generalization capability.

These results collectively indicate that the proposed hybrid model achieves high-precision predictions with only a small amount of training data. Compared to the baseline models, the hybrid model offers a significant enhancement in prediction accuracy while exhibiting robust generalization capability.

CONCLUSIONS

In summary, this work presents an effective data-driven framework for battery RUL prognostics. The integration of CEEMDAN denoising with a Transformer-CNN-BiGRU architecture addresses key challenges in capturing both long-term degradation trends and short-term capacity variations. In addition, by leveraging readily monitorable voltage and current data to derive predictive HIs, the framework offers a cost-effective alternative to direct capacity measurement, enhancing feasibility for deployment in battery management systems. Critically, cross-dataset validation demonstrates robust generalization, achieving MAPE below 3.5% when transferring between NASA and NCM batteries without retraining. Moreover, the model’s ability to deliver reliable long-term RUL forecasts with limited early-cycle data is particularly valuable for optimizing battery utilization and mitigating safety risks associated with unexpected failures. Future work will focus on validating the framework across an even broader range of battery types and operating scenarios, exploring adaptive mechanisms for continuous online learning within battery management system constraints, and further optimizing the architecture for embedded implementation.

DECLARATIONS

Authors’ contributions

Data curation (lead); formal analysis (lead); investigation (lead); methodology (lead); visualization (lead); writing - original draft (lead); writing - review and editing (lead): Xu, M.

Data curation; formal analysis; investigation: Xing, S.; Hong, J.; Tian, X.; Huang, B.; Jin, H.

Conceptualization (lead); supervision (lead); visualization; writing - original draft (lead); writing - review and editing (lead): Li, J.

Availability of data and materials

Part of the data in this study is sourced from the NASA Open Science Data Repository. The NCM battery data used in this study are available on GitHub: https://github.com/MTT-ginger/NCM-battery-dataset. The machine-learning code developed for the accelerated framework is available on GitHub: https://github.com/MTT-ginger/CEEMDAN-CNN-Transformer-BiGRU.

AI and AI-assisted tools statement

Not applicable.

Financial support and sponsorship

This work was supported by the National Natural Science Foundation of China (Grant Nos. 12192213, 92466201, and 12202155), the Guangdong Basic and Applied Basic Research Foundation (Grant No. 2023B1515130003), the Financial Support for Outstanding Talents Training Fund in Shenzhen, the Guangdong Provincial Key Laboratory Program (Grant No. 2021B1212040001), and the China Postdoctoral Science Foundation (Grant No. 2025M771862).

Conflicts of interest

All authors declared that there are no conflicts of interest.

Ethical approval and consent to participate

Not applicable.

Consent for publication

Not applicable.

Copyright

© The Author(s) 2026.

Supplementary Materials

REFERENCES

1. Goodenough, J. B.; Kim, Y. Challenges for rechargeable Li batteries. Chem. Mater. 2010, 22, 587-603.

2. Huang, J.; Boles, S. T.; Tarascon, J. Sensing as the key to battery lifetime and sustainability. Nat. Sustain. 2022, 5, 194-204.

3. Dembitskiy, A. D.; Humonen, I. S.; Eremin, R. A.; Aksyonov, D. A.; Fedotov, S. S.; Budennyy, S. A. Benchmarking machine learning models for predicting lithium ion migration. npj. Comput. Mater. 2025, 11, 1571.

4. Attia, P. M.; Grover, A.; Jin, N.; et al. Closed-loop optimization of fast-charging protocols for batteries with machine learning. Nature 2020, 578, 397-402.

5. Downie, L. E.; Hyatt, S. R.; Wright, A. T. B.; Dahn, J. R. Determination of the time dependent parasitic heat flow in lithium ion cells using isothermal microcalorimetry. J. Phys. Chem. C. 2014, 118, 29533-41.

6. Severson, K. A.; Attia, P. M.; Jin, N.; et al. Data-driven prediction of battery cycle life before capacity degradation. Nat. Energy. 2019, 4, 383-91.

7. Tian, H.; Qin, P.; Li, K.; Zhao, Z. A review of the state of health for lithium-ion batteries: research status and suggestions. J. Clean. Prod. 2020, 261, 120813.

8. Lin, C.; Cabrera, J.; Yang, F.; Ling, M.; Tsui, K.; Bae, S. Battery state of health modeling and remaining useful life prediction through time series model. Appl. Energy. 2020, 275, 115338.

9. Shu, X.; Yang, W.; Wei, K.; et al. Research on capacity characteristics and prediction method of electric vehicle lithium-ion batteries under time-varying operating conditions. J. Energy. Storage. 2023, 58, 106334.

10. Wang, Y. Application-oriented design of machine learning paradigms for battery science. npj. Comput. Mater. 2025, 11, 1575.

11. Yang, L.; Cai, Y.; Yang, Y.; Deng, Z. Supervisory long-term prediction of state of available power for lithium-ion batteries in electric vehicles. Appl. Energy. 2020, 257, 114006.

12. Ramadesigan, V.; Northrop, P. W. C.; De, S.; Santhanagopalan, S.; Braatz, R. D.; Subramanian, V. R. Modeling and simulation of lithium-ion batteries from a systems engineering perspective. J. Electrochem. Soc. 2012, 159, R31-45.

13. Deng, Z.; Yang, L.; Deng, H.; Cai, Y.; Li, D. Polynomial approximation pseudo-two-dimensional battery model for online application in embedded battery management system. Energy 2018, 142, 838-50.

14. Karimi, D.; Behi, H.; Van Mierlo, J.; Berecibar, M. Equivalent circuit model for high-power lithium-ion batteries under high current rates, wide temperature range, and various state of charges. Batteries 2023, 9, 101.

15. Larsson, C.; Larsson, F.; Xu, J.; Runesson, K.; Asp, L. E. Electro-chemo-mechanical modelling of structural battery composite full cells. npj. Comput. Mater. 2025, 11, 1646.

16. Wang, F.; Zhai, Z.; Zhao, Z.; Di, Y.; Chen, X. Physics-informed neural network for lithium-ion battery degradation stable modeling and prognosis. Nat. Commun. 2024, 15, 4332.

17. E, L.; Wang, J.; Yang, R.; Wang, C.; Li, H.; Xiong, R. A physics-informed neural network-based method for predicting degradation trajectories and remaining useful life of supercapacitors. Green. Energy. Intell. Transp. 2025, 4, 100291.

18. E, L.; Wang, J.; Sun, Y.; Shen, W.; Xiong, R. Physics-informed hybrid neural architecture for coupled degradation modeling and remaining useful life prediction of LiFePO4 batteries. J. Electrochem. Soc. 2025, 172, 060505.

19. Wen, P.; Ye, Z.; Li, Y.; Chen, S.; Xie, P.; Zhao, S. Physics-informed neural networks for prognostics and health management of lithium-ion batteries. IEEE. Trans. Intell. Veh. 2024, 9, 2276-89.

20. Lv, K.; Ma, Z.; Bao, C.; Liu, G. Indirect prediction of lithium-ion battery RUL based on CEEMDAN and CNN-BiGRU. Energies 2024, 17, 1704.

21. Xu, Q.; Wu, M.; Khoo, E.; Chen, Z.; Li, X. A hybrid ensemble deep learning approach for early prediction of battery remaining useful life. IEEE/CAA. J. Autom. Sinica. 2023, 10, 177-87.

22. Zheng, D.; Zhang, Y.; Guo, X.; Ning, Y.; Wei, R. Research on the remaining useful life prediction method for lithium-ion batteries based on feature engineering and CNN-BiGRU-AM model. Ionics 2025, 31, 5717-36.

23. Wang, C.; Jiang, W.; Yang, X.; Zhang, S. RUL prediction of rolling bearings based on a DCAE and CNN. Appl. Sci. 2021, 11, 11516.

24. Guo, L.; Li, N.; Jia, F.; Lei, Y.; Lin, J. A recurrent neural network based health indicator for remaining useful life prediction of bearings. Neurocomputing 2017, 240, 98-109.

25. Zhang, H.; Zhang, Q.; Shao, S.; Niu, T.; Yang, X. Attention-based LSTM network for rotatory machine remaining useful life prediction. IEEE. Access. 2020, 8, 132188-99.

26. Zeng, F.; Li, Y.; Jiang, Y.; Song, G. A deep attention residual neural network-based remaining useful life prediction of machinery. Measurement 2021, 181, 109642.

27. Tan, R.; Lu, X.; Cheng, M.; Li, J.; Huang, J.; Zhang, T. Forecasting battery degradation trajectory under domain shift with domain generalization. Energy. Storage. Mater. 2024, 72, 103725.

28. Wei, M.; Ye, M.; Wang, Q.; Xu, X.; Twajamahoro, J. P. Remaining useful life prediction of lithium-ion batteries based on stacked autoencoder and Gaussian mixture regression. J. Energy. Storage. 2022, 47, 103558.

29. Xing, J.; Zhang, H.; Zhang, J. Remaining useful life prediction of lithium batteries based on principal component analysis and improved Gaussian process regression. Int. J. Electrochem. Sci. 2023, 18, 100048.

30. Li, H.; Zhao, W.; Zhang, Y.; Zio, E. Remaining useful life prediction using multi-scale deep convolutional neural network. Appl. Soft. Comput. 2020, 89, 106113.

31. Wu, L.; Yoo, S.; Suzana, A. F.; et al. Three-dimensional coherent X-ray diffraction imaging via deep convolutional neural networks. npj. Comput. Mater. 2021, 7, 644.

32. Zhou, J.; Qin, Y.; Chen, D.; Liu, F.; Qian, Q. Remaining useful life prediction of bearings by a new reinforced memory GRU network. Adv. Eng. Inform. 2022, 53, 101682.

33. Gao, K.; Huang, Z.; Lyu, C.; Liu, C. Multi-scale prediction of remaining useful life of lithium-ion batteries based on variational mode decomposition and integrated machine learning. J. Energy. Storage. 2024, 99, 113372.

34. Ardeshiri, R.; Ma, C. Multivariate gated recurrent unit for battery remaining useful life prediction: a deep learning approach. Int. J. Energy. Res. 2021, 45, 16633-48.

35. Wang, Z.; Liu, N.; Chen, C.; Guo, Y. Adaptive self-attention LSTM for RUL prediction of lithium-ion batteries. Inf. Sci. 2023, 635, 398-413.

36. Wang, F.; Amogne, Z. E.; Chou, J.; Tseng, C. Online remaining useful life prediction of lithium-ion batteries using bidirectional long short-term memory with attention mechanism. Energy 2022, 254, 124344.

37. Tian, Y.; Wen, J.; Yang, Y.; Shi, Y.; Zeng, J. State-of-health prediction of lithium-ion batteries based on CNN-BiLSTM-AM. Batteries 2022, 8, 155.

38. Wang, T.; Wang, K.; Pan, K. A Transformer-CNN-BiLSTM architecture for SOH prediction of battery storage systems. In 2024 IEEE 8th Conference on Energy Internet and Energy System Integration (EI2), Shenyang, China, November 29-December 2, 2024; IEEE: New York, USA, 2024; pp 4591-6.

39. Jin, Y.; Hou, L.; Chen, Y. A Time Series Transformer based method for the rotating machinery fault diagnosis. Neurocomputing 2022, 494, 379-95.

40. Zhang, Q.; Qin, C.; Zhang, Y.; Bao, F.; Zhang, C.; Liu, P. Transformer-based attention network for stock movement prediction. Expert. Syst. Appl. 2022, 202, 117239.

41. Hu, W.; Zhao, S. Remaining useful life prediction of lithium-ion batteries based on wavelet denoising and transformer neural network. Front. Energy. Res. 2022, 10, 969168.

42. Huang, X.; Tang, J.; Shen, Y. Long time series of ocean wave prediction based on PatchTST model. Ocean. Eng. 2024, 301, 117572.

43. Tang, P.; Qiu, Z.; Yao, Z.; et al. Lithium-ion battery RUL prediction based on optimized VMD-SSA-PatchTST algorithm. Sci. Rep. 2025, 15, 26824.

44. Li, X.; Zhang, L.; Wang, Z.; Dong, P. Remaining useful life prediction for lithium-ion batteries based on a hybrid model combining the long short-term memory and Elman neural networks. J. Energy. Storage. 2019, 21, 510-8.

45. Jiao, R.; Peng, K.; Dong, J. Remaining useful life prediction of lithium-ion batteries based on conditional variational autoencoders-particle filter. IEEE. Trans. Instrum. Meas. 2020, 69, 8831-43.

46. Ahwiadi, M.; Wang, W. An enhanced mutated particle filter technique for system state estimation and battery life prediction. IEEE. Trans. Instrum. Meas. 2019, 68, 923-35.
